UX Legal Agent

My Role

This project is research-driven and grounded in domain-specific insight. I designed the system based on real-world legal complexity: interviewing legal professionals, analyzing actual workflows, and mapping the criteria used in EU trademark disputes. I was responsible for translating these insights into a functional and ethically grounded prototype.

The prototype was grounded in principles of Human-Centered AI. This meant designing the system so users could clearly see how the AI reached its conclusions through structured reasoning, references to case law, and visual argumentation. By asking users to make their own prediction before the AI presents its output, the system encourages reflection and critical thinking, helping users better understand both their own reasoning and the AI’s logic. When the AI and human reach different conclusions, it becomes a learning moment, not a failure.

Why?

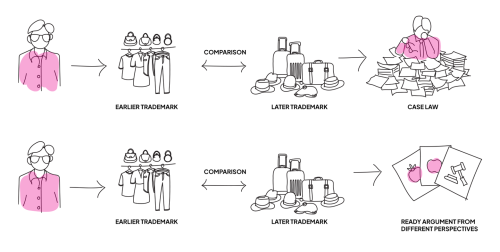

This illustration contrasts the current legal workflow with the proposed AI-supported approach.

The top row shows how trademark disputes are handled today: legal professionals manually compare goods and services across categories, often facing an overwhelming amount of case law to read and interpret in order to identify earlier decisions that might support their argument or point of view.

The bottom row presents the legal expert, now supported by an AI agent that analyzes large volumes of case law and Canon-criteria reasoning. It delivers three structured outputs: an argument from the opponent, one from the applicant, and the likely decision from the EUIPO. Crucially, the AI makes its thought process visible, allowing the human expert to judge whether the reasoning holds, keeping the lawyer in control.

It’s a shift from cognitive overload to clarity, from “what is” to “what ought to be.”

The prototype

The process begins with the user entering two trademarks to compare by pasting in the application numbers and selecting the relevant class. The system then retrieves data and begins the analysis.

The AI generates three clearly separated outputs: one argument from the opponent’s perspective, one from the applicant’s side, and a probable decision based on EUIPO practice. Each section includes links that let the user explore where the reasoning comes from, including references to case law and the specific Canon-criteria being applied.
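To make the three-part output concrete, here is a minimal sketch of how such a result could be represented as data. It is an illustration, not the prototype's actual schema: the class names (`Source`, `Argument`, `Analysis`), the field names, and every value in `example` are hypothetical placeholders.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Source:
    """A link the user can follow to see where a claim comes from."""
    kind: str   # assumed categories: "case_law" or "canon_criterion"
    label: str  # human-readable reference; placeholder values only


@dataclass
class Argument:
    """One of the three separated output sections."""
    perspective: str  # "opponent", "applicant", or "likely_decision"
    text: str
    sources: List[Source] = field(default_factory=list)


@dataclass
class Analysis:
    """The full result for one comparison of two marks in one class."""
    earlier_mark: str
    contested_mark: str
    selected_class: int
    opponent: Argument
    applicant: Argument
    likely_decision: Argument

    def sections(self) -> List[Argument]:
        # Fixed display order: both sides first, then the predicted outcome.
        return [self.opponent, self.applicant, self.likely_decision]

    def unsupported_sections(self) -> List[str]:
        # Sections with no linked source cannot be checked by the expert,
        # so the interface could flag them instead of presenting them as is.
        return [s.perspective for s in self.sections() if not s.sources]


# Hypothetical example; none of these identifiers refer to real filings.
example = Analysis(
    earlier_mark="EXAMPLE-0001",
    contested_mark="EXAMPLE-0002",
    selected_class=25,
    opponent=Argument(
        "opponent",
        "Placeholder argument for the opponent.",
        [Source("case_law", "PLACEHOLDER-DECISION-A")],
    ),
    applicant=Argument(
        "applicant",
        "Placeholder argument for the applicant.",
        [Source("canon_criterion", "placeholder criterion")],
    ),
    likely_decision=Argument("likely_decision", "Placeholder prediction."),
)

print(example.unsupported_sections())
```

Keeping sources attached to each argument, rather than in one shared list, mirrors the design goal described above: the expert can judge each line of reasoning on its own evidence.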

The goal is not just to deliver answers, but to make the AI’s thought process visible so the human expert remains in control. A feedback feature lets users respond to individual arguments, creating a feedback loop that improves the system over time. The layout is clean, readable, and deliberately minimal to reduce cognitive load, focusing attention on critical legal distinctions.

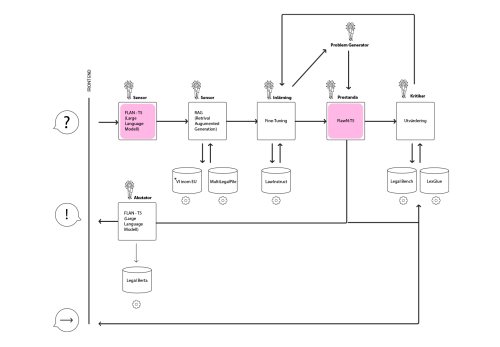

The diagram

This diagram illustrates how the system works behind the scenes.

The system uses Retrieval-Augmented Generation (RAG) to combine the power of large language models with targeted legal data from sources like MultiLegalPile and LawInstruct. The AI model, FLawN-T5, is fine-tuned for multilingual legal reasoning and trained on instruction-based legal tasks.
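The retrieval step of a RAG pipeline can be sketched in a few lines. This is a toy illustration under stated assumptions, not the prototype's implementation: it ranks passages with a simple bag-of-words cosine similarity instead of a real embedding index, the corpus entries are invented placeholders, and the function names (`retrieve`, `build_prompt`) are hypothetical. The assembled prompt is what would then be passed to the language model.

```python
import math
import re
from collections import Counter
from typing import Dict, List


def tokenize(text: str) -> List[str]:
    # Lowercase and keep alphabetic runs only; a real system would
    # use a proper tokenizer or an embedding model instead.
    return re.findall(r"[a-z]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    # Cosine similarity between two term-count vectors.
    dot = sum(a[t] * b[t] for t in a.keys() & b.keys())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0 or norm_b == 0:
        return 0.0
    return dot / (norm_a * norm_b)


def retrieve(query: str, corpus: List[Dict[str, str]], k: int = 2) -> List[Dict[str, str]]:
    # Score every passage against the query and keep the top k matches.
    q = Counter(tokenize(query))
    scored = [(cosine(q, Counter(tokenize(doc["text"]))), doc) for doc in corpus]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for score, doc in scored[:k] if score > 0]


def build_prompt(question: str, passages: List[Dict[str, str]]) -> str:
    # Tag each passage with its id so the model's answer can cite it,
    # which is what lets the interface link reasoning back to sources.
    context = "\n".join(f"[{p['id']}] {p['text']}" for p in passages)
    return (
        "Answer using only the passages below and cite their ids.\n\n"
        f"{context}\n\nQuestion: {question}"
    )


# Placeholder corpus; these are not real decisions or dataset entries.
corpus = [
    {"id": "doc-1", "text": "Placeholder passage about similarity of goods: clothing and footwear"},
    {"id": "doc-2", "text": "Placeholder passage about software and computer services"},
    {"id": "doc-3", "text": "Placeholder passage about beverages and catering services"},
]

question = "Are clothing and footwear similar goods?"
top = retrieve(question, corpus, k=1)
prompt = build_prompt(question, top)
print(prompt)
```

Because the retrieved passages carry ids into the prompt, every generated argument can point back to the text it relied on, which supports the transparency goal of the design.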

The result is not black-box automation, but a transparent, explainable legal assistant. Aligned with Human-Centered AI principles, it places the user in control, makes the AI’s reasoning visible, and encourages informed, ethical decision-making. The system is built not just to deliver conclusions but to invite questioning and deeper understanding.